The final theme identified by the questions about Story Points from my AgileAI experiment was a backlash against them and their use.

What is the point of story points?

Should I use story points?

Aren't story points stupid?

Why do story points suck?

And here’s where your author makes an astounding confession - I haven’t used Story Points in over a decade and highly recommend that you stop using them as well!

WHA… WHAT HERESY IS THIS?!

In the other four posts in this series, I’ve reviewed numerous issues that have been encountered with the use of Story Points. From equating them to time to the use of the fake Fibonacci series to endless debates over whether a story is 5 or 8 or 13 points, so much time, effort and patience have been lost that could have been better spent delivering value.

If you think this is the opinion of one rather, um, opinionated coach, then how about the inventor or at least co-inventor of Story Points and the original XP Coach, Ron Jeffries?

In “Story Points, Revisited”, Ron explicitly states,

I like to say that I may have invented story points, and if I did, I’m sorry now.

and,

I do think that they [story points] are frequently misused and that we can avoid many pitfalls by not using story estimates at all.

Wait… no story estimates? How can you possibly do that? How can a team function without estimating their work? How can we show progress towards a release?

Just count the number of User Stories completed. That’s how.

In a much earlier article from 2004 called “A Metric Leading to Agility”, Ron talked about the concept of Running, Tested Features, or RTF.

RTF should increase essentially linearly from day one through to the end of the project. To accomplish that, the team will have to learn to become agile. From day one, until the project is finished, we want to see smooth, consistent growth in the number of Running, Tested Features.

Running means that the features are shipped in a single integrated product.

Tested means that the features are continuously passing tests provided by the requirements givers -- the customers in XP parlance.

Features? We mean real end-user features, pieces of the customer-given requirements, not techno-features like "Install the Database" or "Get Web Server Running".

Ron went to lengths to avoid the use of the term User Story in that article so that the metric involved, RTF Completed, wouldn’t be tied solely to Extreme Programming. The irony is that virtually every single team that uses Scrum today also uses User Stories! 🤷♂️

Note as well what Ron meant by Features. They aren’t technical tasks! You could add tasks like “Create front-end”, “Create back-end” and “Write tests” to the list he already provided. While those are tasks that contribute to allowing the end user to accomplish something, they aren’t features in and of themselves. Instead, ask what we want to allow the end user to do - what capability do we want to enable? That’s the story or feature!

So how, then, do we use this approach in our work? If we aren’t tracking Story Points, how can we determine how much work to pull into an iteration? How can we calculate our velocity?

Planning

The answer to the first question is that you do the same thing you did when using Story Points - you simply count how many Stories you completed in the previous iteration and that’s how many Stories you should bring into this next iteration.

Note, though, that I mean you only count Stories! Don’t count defects. Don’t count technical tasks. Don’t count anything that isn’t directly contributing to end user value. As I said in “No Points for You”, also quoting Ron Jeffries, defects represent negative value, decreasing a team’s ability to deliver what users really want. The other tasks and activities can be considered a “tax” on delivering value as well.

We want to focus on delivering value vs. just showing that we’re busy.

If, for example, your team completed 8 stories last iteration then you can, with a reasonable amount of certainty, pull 8 stories into the new iteration. Then, you can look at any other work the team completed in the previous iteration and consider bringing in some high priority defects, technical work that needs to be done, etc.
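As a rough sketch of that counting rule in Python (the `Issue` record, its field names and the `DEMO-` keys are hypothetical and not tied to any particular tracker), the planning number is simply the count of finished Stories, with defects and technical tasks left out:

```python
from dataclasses import dataclass


@dataclass
class Issue:
    key: str          # hypothetical issue key
    issue_type: str   # assumed labels: "Story", "Bug", "Task"
    done: bool


def stories_completed(issues):
    """Count finished Stories only; defects and technical tasks are ignored."""
    return sum(1 for issue in issues if issue.issue_type == "Story" and issue.done)


# Made-up data for the previous iteration.
previous_iteration = (
    [Issue(f"DEMO-{n}", "Story", True) for n in range(1, 9)]   # 8 finished stories
    + [Issue("DEMO-9", "Story", False)]                         # story still in progress
    + [Issue("DEMO-10", "Bug", True), Issue("DEMO-11", "Task", True)]
)

print(stories_completed(previous_iteration))  # prints 8
```

The finished bug and task still happened, but they don't raise the number the team plans against.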

Something to consider here is that when a defect is raised against a story that’s in progress, it really just indicates that the story isn’t complete. Fix that defect immediately! If, however, your customer or product owner is OK with completing the story with the defect, then and only then create an issue for it and fix it when its priority is high enough. My advice is to fix as many defects as possible as soon as you find them!

Tracking

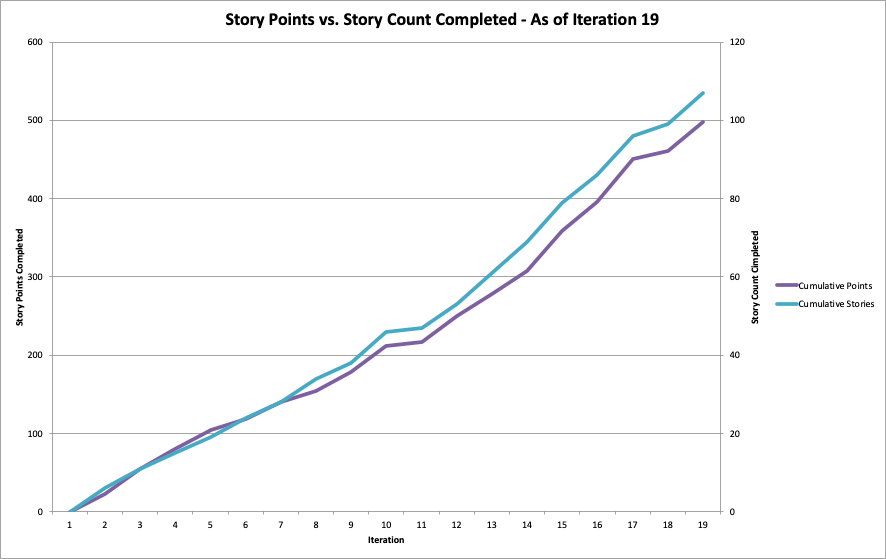

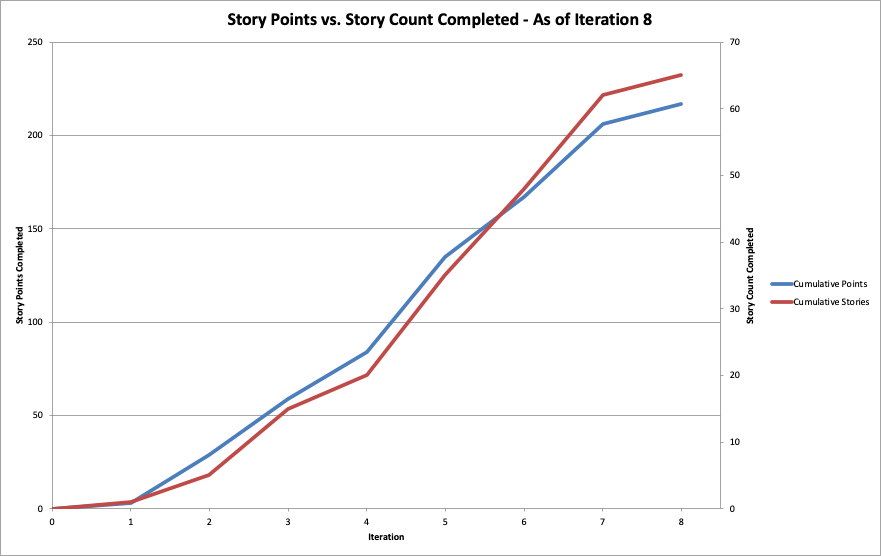

At this point you’re likely thinking that this approach only works when the stories are all the same size. I thought the same when I first heard of the approach, but reality has proven to be somewhat different! Consider these two graphs:

These come from a company at which I worked in the 2010s, from two products with teams for which I had done absolutely no coaching whatsoever. Their User Stories were OK at best but could have been sliced substantially thinner.

If you plot the Story Count Completed and the Story Points Completed, each scaled to its own maximum value, you see that the lines are essentially identical regardless of the variation in the size of the stories. The data for these graphs were pulled directly from Jira and the Story Point estimates ranged from 1 point to 20 points for individual stories.
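For anyone who wants to try the same comparison on their own data, here is a minimal Python sketch. It assumes the graphs plot cumulative totals per iteration, each divided by its own maximum; the numbers are invented for illustration and are not the data behind the graphs.

```python
from itertools import accumulate


def scale_to_max(series):
    """Divide every value by the largest value so the series tops out at 1.0."""
    peak = max(series, default=0)
    if peak == 0:
        return []
    return [value / peak for value in series]


# Hypothetical per-iteration totals for one team.
story_counts = [4, 6, 5, 7, 8]
story_points = [13, 21, 16, 24, 26]

# Running totals, then each curve scaled to its own maximum.
count_curve = scale_to_max(list(accumulate(story_counts)))
points_curve = scale_to_max(list(accumulate(story_points)))

# Largest vertical distance between the two scaled curves.
largest_gap = max(abs(c - p) for c, p in zip(count_curve, points_curve))
print(f"largest gap between curves: {largest_gap:.3f}")
```

A small gap means the two lines would sit nearly on top of each other when plotted, which is the pattern described above.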

My inspiration for mining the Jira data and creating these graphs came from Winnipeg, Manitoba agilist Steve Rogalsky who, in 2015, wrote about performing a similar analysis and observing identical results.

Accuracy

Here comes the really fun part! When we use Story Points, how accurate are our estimates? Well that’s hard to say since points aren’t supposed to be tied to any notion of time and are relative to other stories. However, there are insights that can be gleaned from calculating the actual Cycle Times of completed stories compared to the number of points estimated.

Mike Bowler, an agile coach in Kelowna, BC, created a tool called jirametrics which provides a number of interesting reports that aren’t available in core Jira. If you use Jira, I highly recommend Mike’s free, open source tool!

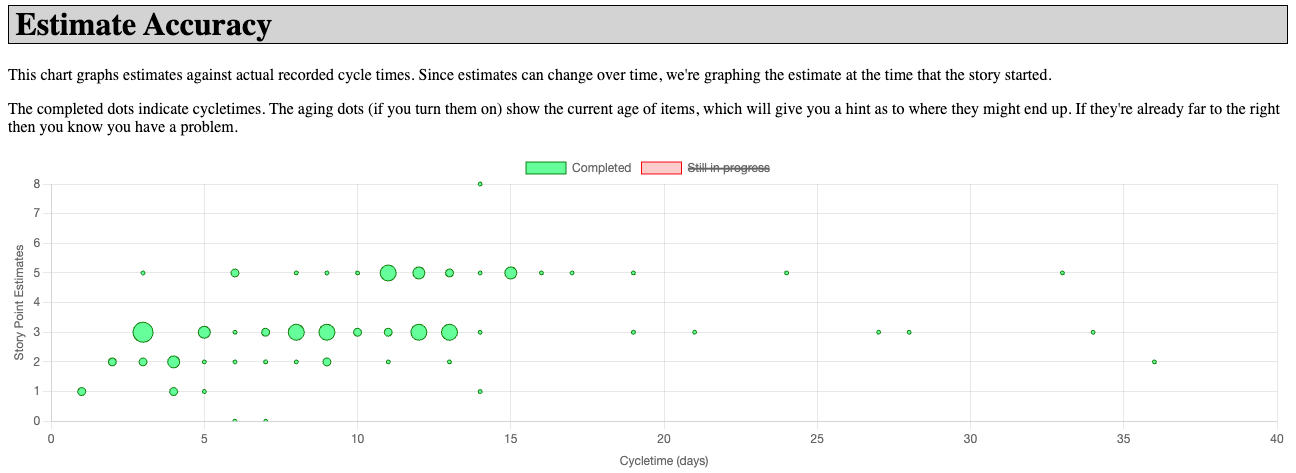

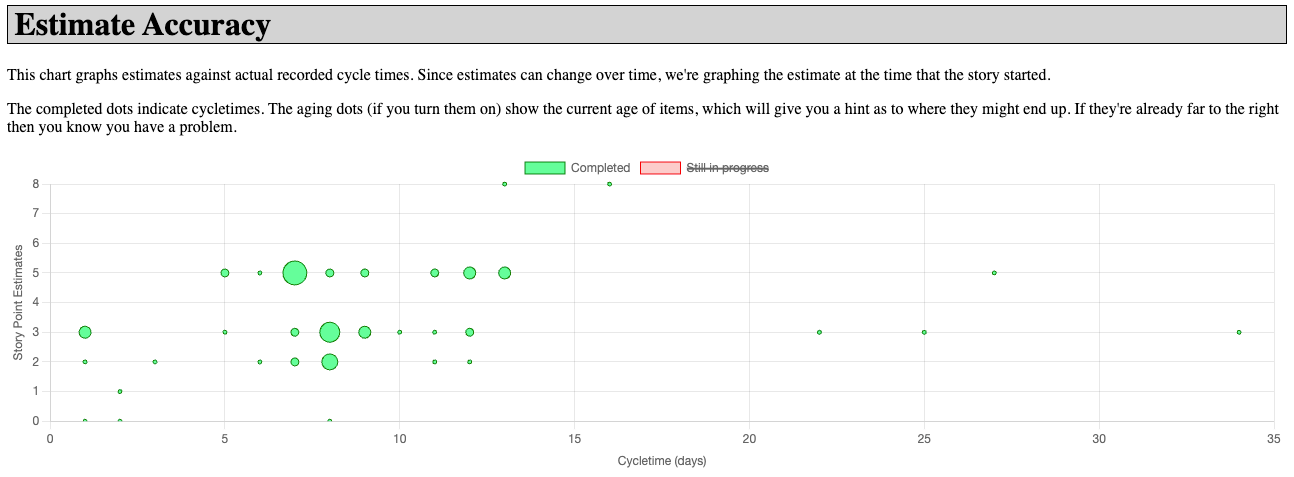

One of these reports is Estimation Accuracy which plots the Story Point estimates against the actual Cycle Times. I ran that report against some Jira projects at two recent clients and had the following results:

To read these graphs, the Y (vertical) axis is the original estimate in Story Points and the X (horizontal) axis is the actual Cycle Time in days for completing the stories. The size of the circle in the chart represents the number of stories at that Point/Cycle Time value.

If you look at Product 1, for stories with an estimate of 3 points you’ll see a range of Cycle Times of 3 to 34 elapsed days to complete those stories. For Product 2, the range for an estimate of 3 points is 1 to 34 days. That said, for Product 1 the majority of the stories with a 3 Point estimate have an actual Cycle Time of 3 to 13 days. For Product 2, the majority of the stories with a 3 Point estimate have a Cycle Time of 1 to 12 days.

For both of these products and for all of the Point values, the Cycle Time range, even if you ignore the outliers, demonstrates that the estimates were essentially meaningless! Yes, the Cycle Times tend to be longer for a story with an 8 Point estimate than for a story with a 2 Point estimate. Thankfully, the teams on these two products had restricted themselves to a maximum of 8 Points on any one story.
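If you don't have a reporting tool handy, the same kind of spread can be checked with a few lines of Python. This is only a sketch of the idea, not how jirametrics works internally, and the `(points, cycle_time_days)` pairs are invented sample data:

```python
from collections import defaultdict


def cycle_time_ranges(stories):
    """Map each Story Point estimate to the (shortest, longest) Cycle Time in days."""
    days_by_points = defaultdict(list)
    for points, cycle_time_days in stories:
        days_by_points[points].append(cycle_time_days)
    return {
        points: (min(days), max(days))
        for points, days in sorted(days_by_points.items())
    }


# Hypothetical completed stories as (points, cycle_time_days).
completed = [(2, 1), (2, 9), (3, 3), (3, 13), (3, 34), (8, 6), (8, 40)]

for points, (shortest, longest) in cycle_time_ranges(completed).items():
    print(f"{points} points: {shortest} to {longest} days")
```

When the ranges for different point values overlap as much as they do in this made-up sample, the estimate tells you little about how long a story will actually take.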

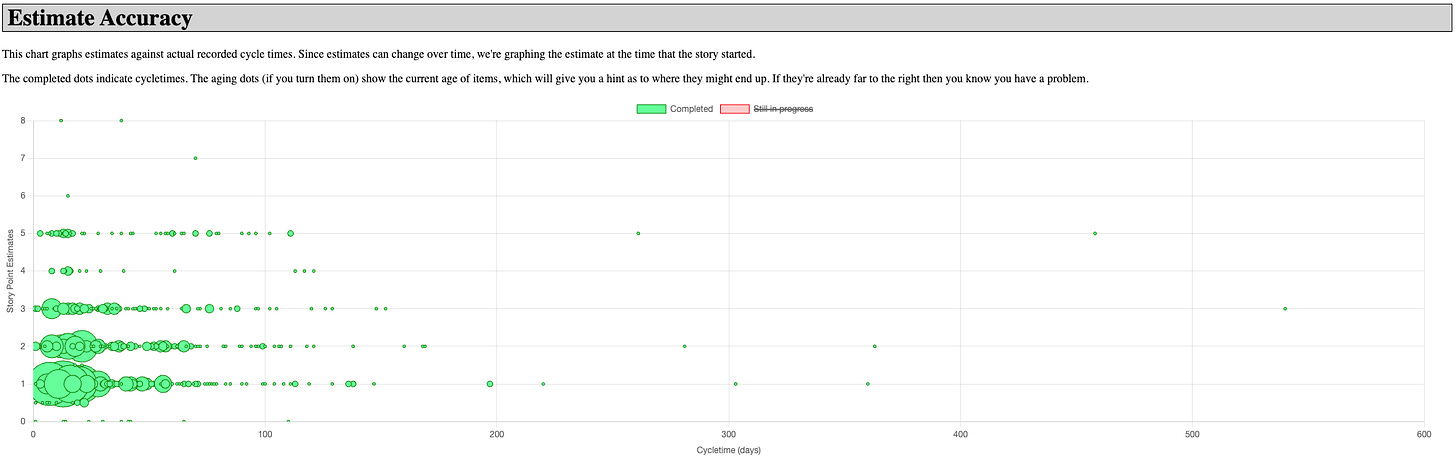

Now, let’s have a look at the same chart from a product at a different organization. This one had years of Jira data from which to pull:

Again, look at the range of actual Cycle Time for each value of Story Points. 😳

From the data in this chart, we can say that a story with an estimate of 3 Points could take anywhere from 1 day to 90 days to complete. That’s not terribly helpful, is it?

All of this goes to prove that Story Point estimates provide very little, if any, value.

Conversations

One of the concerns that has been raised when I suggest moving to just counting stories completed vs. estimating is that the estimation process itself drives conversations leading to a better understanding of what a story is about and whether it should be split. I don’t disagree with this, but I will assert (and it has been my experience multiple times) that you can have those same conversations regardless of whether you’re estimating or not!

There are myriad resources on the Web about how to split User Stories:

The Humanizing Work Guide to Splitting User Stories with its associated flowchart in PDF form: How to Split a User Story

Use what you learn from resources such as those to write better stories and slice them as thin as possible while still providing value to whomever is consuming the work. Then, rather than estimating in points, simply count stories completed per some unit of time like an iteration, and use that as your planning metric.

That’s what Ron Jeffries advocated 20 years before the writing of this post in “A Metric Leading to Agility”.

Estimating Larger Work

I’ve talked here about estimating at the granularity of User Stories, but we’re often asked to provide estimates at the scale of a release. That’s outside the scope of this post, but I will say that you should look into Monte Carlo simulation and Reference Class forecasting for bigger “chunks” of work.
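To give a flavour of the Monte Carlo idea without going deep, here is a minimal Python sketch under simple assumptions: past stories-per-iteration counts are resampled at random to simulate how many iterations a backlog of a given size might take. The function names, the throughput history and the backlog size are all hypothetical.

```python
import random


def simulate_iterations(throughput_history, stories_remaining, trials=10_000, seed=None):
    """Return a sorted list of simulated iteration counts needed to finish the backlog."""
    if not throughput_history or max(throughput_history) <= 0:
        raise ValueError("need at least one iteration with completed stories")
    rng = random.Random(seed)
    outcomes = []
    for _ in range(trials):
        remaining, iterations = stories_remaining, 0
        while remaining > 0:
            # Pretend the next iteration looks like a randomly chosen past one.
            remaining -= rng.choice(throughput_history)
            iterations += 1
        outcomes.append(iterations)
    return sorted(outcomes)


def percentile(sorted_values, pct):
    """Value at or below which roughly pct percent of the outcomes fall."""
    index = min(len(sorted_values) - 1, int(len(sorted_values) * pct / 100))
    return sorted_values[index]


# Hypothetical history: stories completed in each of the last five iterations.
history = [6, 8, 7, 9, 8]
outcomes = simulate_iterations(history, stories_remaining=40, seed=1)

print("50% of runs finished within", percentile(outcomes, 50), "iterations")
print("85% of runs finished within", percentile(outcomes, 85), "iterations")
```

The output is a range with a confidence level attached rather than a single date, and the only input is the count of completed stories.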

Conclusion

As the Agile Manifesto says, “We are uncovering better ways of developing software by doing it and helping others do it”. Abandoning the use of Story Points is a prime example of something we have learned from doing and helping others. Also, one of the key principles of the Manifesto is “Simplicity - the art of maximizing the amount of work not done”. By eliminating the need for estimation of each story, we’re satisfying that principle and freeing up time for value added work. We don’t need to estimate using Story Points - we can just count completed stories.

It really is that simple.